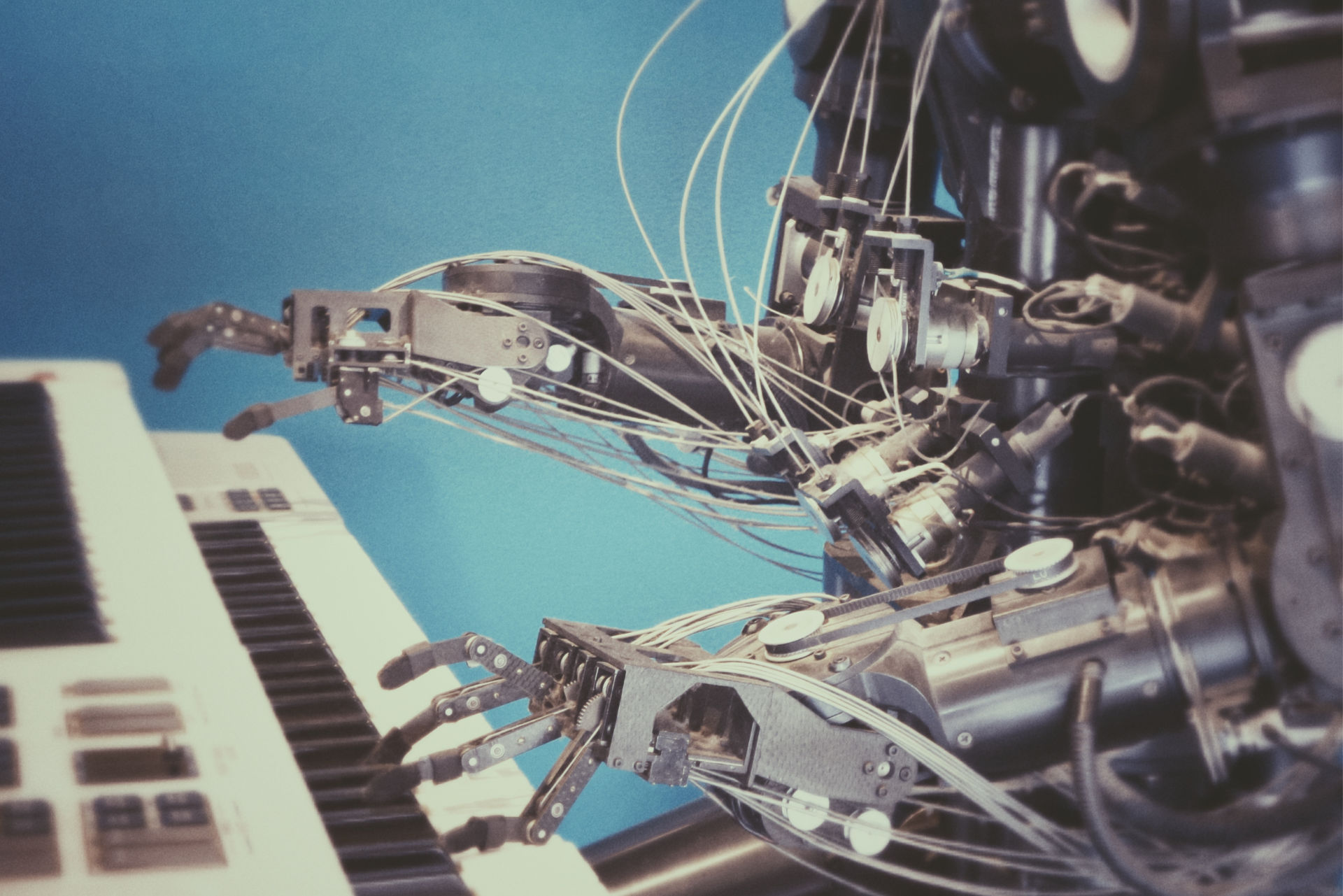

You’ve definitely heard of AI and machine learning. You’ve likely heard how they’re significantly changing the marketing landscape, but if not, here’s a stat from Forbes:

84% of marketing organizations are implementing or expanding AI and machine learning in 2018. The technology has seemingly endless applications, all promising to make marketing easier and more effective.

But don’t fire all your human employees just yet.

In our endless pursuit of knowledge, we came across an article by sociologist Polina Aronson and journalist Judith Duportail that was enlightening, to say the least. While the focus was on the use of technology in emotional regulation, there’s certainly a takeaway in there for us (more on that later). Here’s the gist.

As technology advances, our devices are beginning to function as personal assistants in many ways, including in the areas of mental health and emotional regulation. Siri and Alexa have been promoted from assistant to therapist, offering cheery advice when you’re feeling down. There are productivity and mindfulness programs, apps aimed at tackling depression and anxiety, and even pulse monitoring to ensure your mental stress and strain don’t affect you physically.

What ties it all together is the AI built into your device, which is deployed to give you a human-esque helper. That AI uses machine learning to inform the personality and conversational style of these bots. Machine learning, according to Wikipedia, “uses statistical techniques to give computer systems the ability to ‘learn’ (e.g., progressively improve performance on a specific task) from data, without being explicitly programmed.”

The inherent problem, then, becomes the data itself. As Aronson and Duportail put it, “…because these algorithms learn from the most statistically relevant bits of data, they tend to reproduce what’s going around the most, not what’s true or useful or beautiful. As a result, when the human supervision is inadequate, chatbots left to roam the internet are prone to start spouting the worst kinds of slurs and clichés.”
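To make that concrete, here is a deliberately tiny Python sketch of the "reproduce what's going around the most" problem. It is not how Siri, Alexa, or any real chatbot works. The data and the `most_common_reply` function are made up for illustration, and the "learning" is nothing more than counting.

```python
from collections import Counter

# Hypothetical data: replies a bot "overheard" for one prompt.
# Three thoughtful replies appear once each; one unhelpful reply appears five times.
observed_replies = [
    "Try talking to someone you trust.",
    "A short walk can help.",
    "Consider speaking with a professional.",
] + ["Just get over it."] * 5


def most_common_reply(replies):
    """Return the reply seen most often, with no check on whether it is true or useful."""
    counts = Counter(replies)
    reply, _count = counts.most_common(1)[0]
    return reply


print(most_common_reply(observed_replies))  # prints: Just get over it.
```

The loudest reply wins simply because it shows up most often. Nothing in the counting step knows or cares whether the advice is any good.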

Imagine your therapist did all the requisite schooling, but some ignorant troll yelled louder than all the professors, so now your therapist doles out advice based on what she heard most, not what was correct. This is the fatal flaw in machine learning: humanity. Sounds like an episode of Black Mirror, right?

All is not lost, however, and the solution is both ironic and simple. Human management is required to ensure AI hears the professors and not the trolls. This applies across the board, and our industry is no exception. While machine learning is poised to change the game for marketers (in the areas of search, recommendation engines, programmatic advertising, marketing forecasting, conversational commerce, customer segmentation and even content generation), we still need good people to monitor and course-correct our well-meaning bots.
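As a rough illustration of what "human management" might look like in code, here is a toy Python sketch in which a person reviews what a bot has picked up and rejects the bad replies before the bot is allowed to choose one. The function name, the `rejected_by_reviewer` set, and the sample data are all hypothetical. Real moderation pipelines are far more involved.

```python
from collections import Counter


def most_common_reply(replies, rejected_by_reviewer=frozenset()):
    """Pick the most frequent reply among those a human reviewer has not rejected."""
    approved = [r for r in replies if r not in rejected_by_reviewer]
    if not approved:
        return None  # nothing survived review, so the bot stays quiet
    return Counter(approved).most_common(1)[0][0]


# Hypothetical data: the "troll" reply outnumbers the "professor" reply five to two.
replies = ["Consider speaking with a professional."] * 2 + ["Just get over it."] * 5

# A human reviewer flags the reply the bot should never repeat.
rejected = {"Just get over it."}

print(most_common_reply(replies))            # prints: Just get over it.
print(most_common_reply(replies, rejected))  # prints: Consider speaking with a professional.
```

The counting is identical in both calls. The only difference is that a person decided which voices the bot gets to hear.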

Takeaway: The hype is real and AI offers marketers an opportunity to engage our audience in ways unimaginable even 10 years ago. It’s not without its flaws, however, and works best in conjunction with human insight and oversight.